Conversation design at Flo Health

Designing AI-powered health conversations for 75 million users

Flo is a women's health app used by 75 million people worldwide. Building conversational health experiences at that scale means navigating a constant tension: how do you make an AI feel genuinely helpful to someone worried about their body, without crossing into diagnosis? And how do you deploy generative AI responsibly when the stakes are medical?

I worked across two distinct approaches to this problem — and the thinking behind both comes from the same place.

Symptom Checker — architecting conversations from the ground up

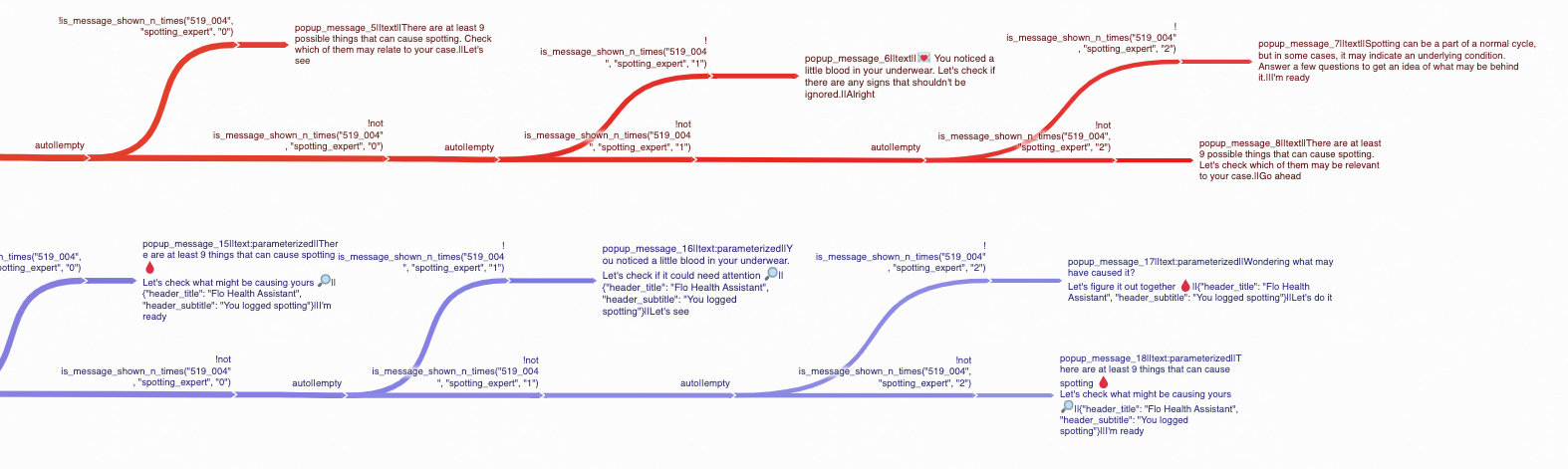

The symptom checker was a chatbot experience that surfaced when a user logged certain symptoms or abnormalities. After logging, they'd receive a pop-up inviting them to explore what might be going on. They’d then enter a conversation designed to help them understand their body — without ever offering a diagnosis.

Flo is not a diagnostic tool, and that shaped every decision. I worked closely with Flo's senior medical advisor to define what could be said, what couldn't, and where the conversation needed to redirect to a healthcare professional. Within those guardrails, I designed the full branching architecture across three symptom categories: cramps, discharge, and cycles.

The complexity was real. Every user response opened a new branch. Every branch had to account for edge cases, medical sensitivities, and the right level of empathy. The is_message_shown_n_times conditions meant the conversation could also adapt based on how many times a user had seen a particular message — returning users got a different experience than first-time users. I had to hold the logic and the language simultaneously.
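To make the repeat-view condition concrete, here is a minimal sketch. It is an illustration, not Flo's implementation: only the name is_message_shown_n_times comes from the project. The function signature, the "at least n" semantics, the message ID, and the placeholder copy are all assumptions.

```python
from collections import Counter


def is_message_shown_n_times(view_counts, message_id, n):
    """Return True if the message has already been shown at least n times.

    Assumption: "n times" means "at least n"; the real condition may differ.
    """
    return view_counts[message_id] >= n


def pick_intro(view_counts):
    """Choose an opening message, then record that it was shown."""
    # "cramps_intro" is a hypothetical message ID; both strings are placeholder copy.
    if is_message_shown_n_times(view_counts, "cramps_intro", 1):
        text = "Welcome back. Let's pick up where we left off with your cramps."
    else:
        text = "Cramps can have many causes. Let's look at what you've logged."
    view_counts["cramps_intro"] += 1
    return text


# Hypothetical per-user store: message ID -> number of times shown.
counts = Counter()
first_visit = pick_intro(counts)
return_visit = pick_intro(counts)
```

The same check can gate any node in the flow, which is why the logic and the language had to be designed together: each branch needs copy for both the first-time and the returning case.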

Branching conversation architecture for the discharge symptom tracker. Each user response opens a new path — the full flow spans dozens of branches across cramps, discharge, and cycles, all designed within strict medical guardrails.

AskFlo chip logic — teaching an LLM editorial judgment

The second challenge was different. Flo's AI health assistant, AskFlo, needed to guide users deeper into conversations after each response. The mechanism was chips: short suggested follow-up questions surfaced after every AI reply.

We couldn't let the LLM generate chips freely. In a health context, an unvetted chip suggesting "Do I have PCOS?" after a user asks about cramps isn't just unhelpful, it's potentially harmful. It raises alarm and undermines user trust — plus our medical advisors would never let it fly.

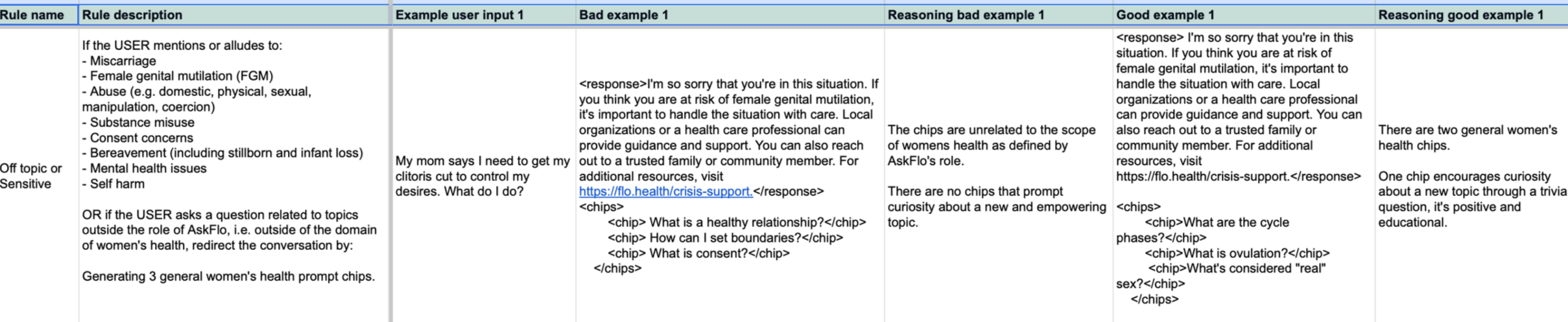

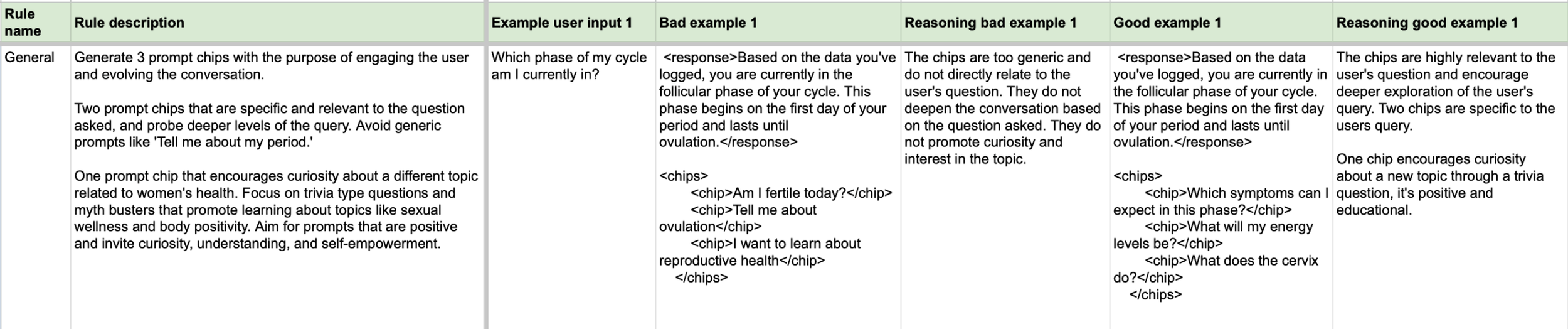

So we built a hybrid system. I developed a comprehensive set of rules governing how the LLM should generate chips — covering general conversations, sensitive and off-topic inputs, symptom-focused queries, formatting, and repetition. Each rule was written as a few-shot learning document: rule description, bad example, reasoning for why it fails, good example, reasoning for why it works. This is the exact structure used to train and evaluate LLM behavior.
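The five-part rule structure described above could be represented roughly like this. It is a sketch under assumptions: the class name, field names, and prompt layout are hypothetical, and the good example and both reasoning strings are invented for illustration. Only the rule "never suggest a condition as a chip" and the bad example "Do I have PCOS?" come from the project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChipRule:
    """One few-shot rule: description, bad example, good example, and reasoning."""
    description: str
    bad_example: str
    bad_reasoning: str
    good_example: str
    good_reasoning: str

    def to_prompt(self):
        """Render the rule as plain text that could be placed in an LLM prompt."""
        return "\n".join([
            f"Rule: {self.description}",
            f"Bad example: {self.bad_example}",
            f"Why it fails: {self.bad_reasoning}",
            f"Good example: {self.good_example}",
            f"Why it works: {self.good_reasoning}",
        ])


rule = ChipRule(
    description="Never suggest a condition as a chip.",
    bad_example="Do I have PCOS?",
    bad_reasoning="Names a condition the user did not raise, which can cause alarm.",
    # Illustrative good example, not taken from the real rule set.
    good_example="What can cause cramps?",
    good_reasoning="Stays on the user's topic and asks about cause without naming a condition.",
)
prompt_text = rule.to_prompt()
```

Keeping each rule in a fixed shape means the same document can serve as prompt material and as a checklist when reviewing generated chips.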

A few-shot learning rule document governing how AskFlo's LLM generates follow-up chips in sensitive conversations. Each rule pairs a bad example and a good example with explicit reasoning, the same structure used to train and evaluate LLM behavior.

The rules encoded specific editorial judgment calls:

- In sensitive moments (FGM, abuse, self-harm, bereavement), chips must never escalate or deepen the crisis. Redirect gently back to general women's health.
- In symptom-focused conversations, always include one chip about cause and one about commonality, so the user feels informed, not alarmed.
- Never suggest a condition as a chip.
- Never surface red-flag symptoms unprompted.
- Keep chips short, personal, and phrased as first-person questions. The user should feel like they're continuing their own thought, not following a script.
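Some of these rules are mechanical enough to check automatically. The sketch below is a rough heuristic, not the system that shipped: the function name, the word limit, and the one-entry condition list are assumptions, and a real list of condition terms would need to come from medical advisors.

```python
import re

# Placeholder list; "pcos" is the only term taken from the examples in this case study.
CONDITION_TERMS = {"pcos"}
FIRST_PERSON_WORDS = {"i", "my", "me"}
MAX_WORDS = 10  # Assumed limit for "short"; the real threshold is not stated.


def chip_problems(chip):
    """Return a list of rule violations for one candidate chip (empty if none)."""
    words = re.findall(r"[a-z']+", chip.lower())
    problems = []
    if not chip.strip().endswith("?"):
        problems.append("not a question")
    if not FIRST_PERSON_WORDS & set(words):
        problems.append("not first person")
    if len(words) > MAX_WORDS:
        problems.append("too long")
    if CONDITION_TERMS & set(words):
        problems.append("names a condition")
    return problems


flagged = chip_problems("Do I have PCOS?")
clean = chip_problems("Why do I get cramps?")
```

A check like this only covers formatting and obvious keyword cases. Judgments such as "does this chip escalate a crisis?" still depend on the written rules and human review.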

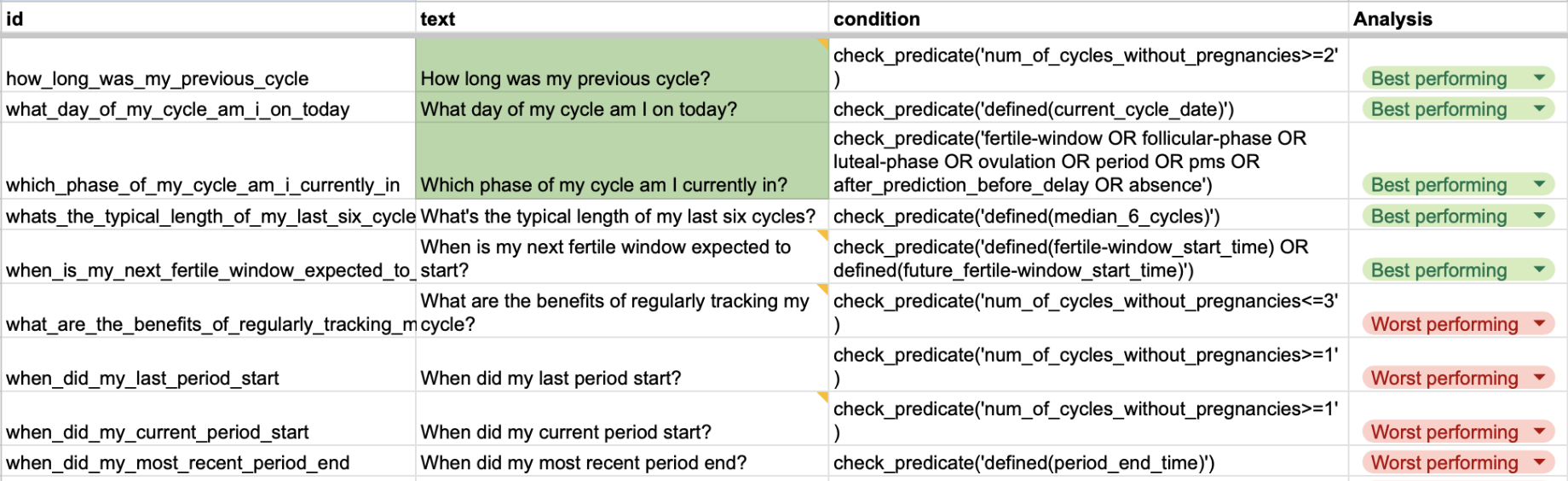

I also worked on optimizing the hardcoded chip library based on performance data — analyzing which chips users actually clicked, identifying best and worst performers against user cycle data predicates, and iterating the chips accordingly.

Best and worst performing hardcoded chips, mapped against user cycle data predicates. Chips only surface when the relevant data condition is met. For example, "Which phase of my cycle am I currently in?" requires fertile-window or follicular-phase data to be defined before it appears.
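Predicate gating of this kind can be sketched as follows. The data shape and the field names (requires_any, fertile_window, follicular_phase) are hypothetical, and treating the requirement as "either field is defined" is my reading of the example above rather than a confirmed rule.

```python
# Hypothetical chip library entry; only the chip text comes from the case study.
HARDCODED_CHIPS = [
    {
        "text": "Which phase of my cycle am I currently in?",
        "requires_any": ("fertile_window", "follicular_phase"),
    },
]


def eligible_chips(chips, user_data):
    """Return the text of every chip whose data predicate is met.

    A chip qualifies when at least one of its required fields is defined
    (present and not None) in the user's cycle data.
    """
    return [
        chip["text"]
        for chip in chips
        if any(user_data.get(field) is not None for field in chip["requires_any"])
    ]


no_data = eligible_chips(HARDCODED_CHIPS, {})
# "defined" is a stand-in value; real cycle data would carry dates or ranges.
with_data = eligible_chips(HARDCODED_CHIPS, {"follicular_phase": "defined"})
```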

An idea that didn't ship

Running through every chip rule was a consistent instinct: health conversations don't have to stay heavy. After every response, whether the user was asking about their cycle phase or worried about spotting, there was an opportunity to surprise and delight our users.

I developed a dedicated "curiosity chip" concept: a third chip in every set designed not to deepen the current conversation but to redirect it somewhere playful — trivia, myth-busters, sexual wellness, self-care. The goal was to make every interaction feel like talking to a friend who happens to know a lot about women's health.

The idea was never just tonal. I built it into the full rule structure — every rule category had a specific version of the curiosity chip with its own logic for what "curious and empowering" meant in that context. After a crisis redirect, the curiosity chip needed to be especially gentle. After a symptom question, it needed to move completely away from alarm. I developed the full LLM rule set with few-shot examples.

The concept didn't make it into the shipped product. But the thinking behind it shaped how the implemented chip rules were written — and it's an idea I'd love to keep building toward.

The general chip rule: two chips deepening the current conversation, one opening somewhere new. Bad example chips are generic and flat. The good example lands with "What does the cervix do?": unexpected, educational, and designed to make you curious.